Most testing happens in comfortable environments where everything works as expected. You feed your tool clean inputs, run it under ideal conditions, and celebrate when it produces the right output. That approach works fine until reality intervenes with malformed prompts, edge-case parameters, or resource constraints that make your pristine test suite completely irrelevant. If you want to build tools that actually survive contact with users, you need to break them deliberately and systematically before anyone else does.

The philosophy behind this comes straight from chaos engineering, which Netflix and other large-scale operations pioneered to make distributed systems more resilient. The core idea is simple but powerful: introduce controlled failures into your production environment to discover weaknesses before they manifest as outages. When you apply that thinking to AI image generation tools like nano-banana-pro, you stop asking “does this work?” and start asking “what happens when this fails in interesting ways?”

Building a stress test vocabulary

The first step is cataloging the ways things can go wrong. For image generation, that means thinking about prompt engineering failures, API rate limits, network timeouts, malformed responses, and quality degradation patterns that only appear under specific conditions. Traditional quality assessment focuses on metrics like perceptual similarity or alignment scores, but those measurements assume you’re feeding the system reasonable inputs. When you deliberately inject chaos, you need to measure resilience, not just correctness.
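One way to make that catalog concrete is a small data structure you can query for gaps. This is a minimal sketch: the scenario names and the `FailureClass` categories are illustrative labels taken from the list above, not identifiers from any real test suite.

```python
from dataclasses import dataclass
from enum import Enum


class FailureClass(Enum):
    PROMPT = "prompt"
    RATE_LIMIT = "rate_limit"
    TIMEOUT = "timeout"
    MALFORMED_RESPONSE = "malformed_response"
    QUALITY_DEGRADATION = "quality_degradation"


@dataclass(frozen=True)
class Scenario:
    name: str                    # hypothetical label, not a real test ID
    failure_class: FailureClass
    description: str


# Illustrative entries only; a real catalog would be much longer.
CATALOG = [
    Scenario("contradictory_prompt", FailureClass.PROMPT, "Prompt with conflicting style requests"),
    Scenario("oversized_prompt", FailureClass.PROMPT, "Description longer than the input limit"),
    Scenario("quota_exhausted", FailureClass.RATE_LIMIT, "Requests sent after the rate limit is hit"),
    Scenario("stalled_connection", FailureClass.TIMEOUT, "Network stalls mid-generation"),
    Scenario("truncated_payload", FailureClass.MALFORMED_RESPONSE, "Response body cut off in transit"),
]


def group_by_class(catalog):
    """Group scenarios by failure class, keeping empty classes visible."""
    grouped = {failure_class: [] for failure_class in FailureClass}
    for scenario in catalog:
        grouped[scenario.failure_class].append(scenario)
    return grouped


def uncovered_classes(catalog):
    """Return the failure classes that have no scenario yet."""
    return [fc for fc, scenarios in group_by_class(catalog).items() if not scenarios]
```

Here `uncovered_classes(CATALOG)` reports that quality degradation has no scenario yet, which is exactly the kind of gap a vocabulary is supposed to expose.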

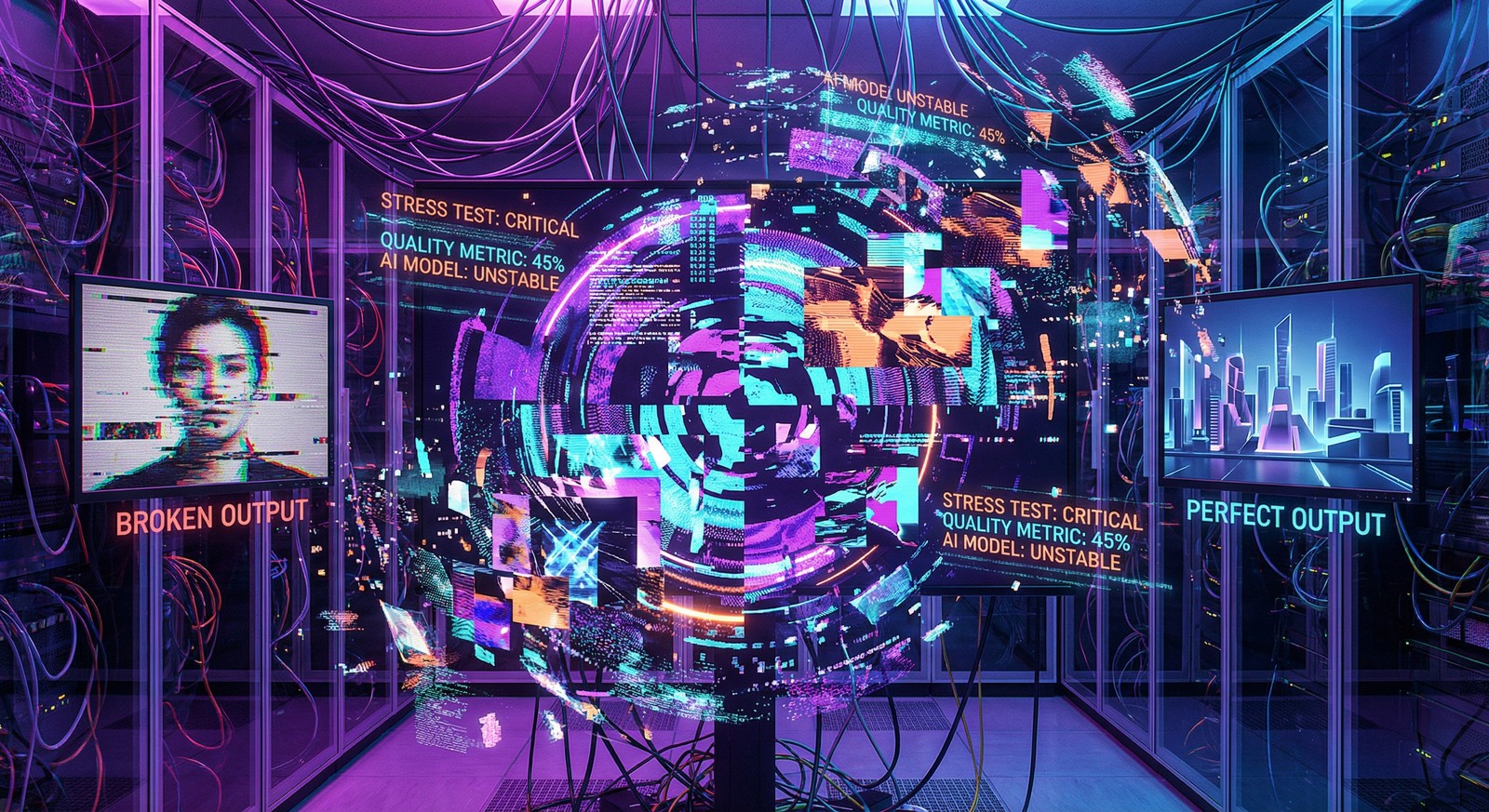

Research on AI-generated image quality assessment shows that both perceptual quality and text-to-image alignment can degrade independently. An image might perfectly match its prompt but suffer from artifacts that make it unusable, or it might be technically pristine but completely misaligned with what was requested. A good stress test suite needs to trigger both failure modes and measure how the tool responds to each.
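Because the two axes fail independently, it helps to record them separately instead of collapsing them into one score. The sketch below assumes both scores are already normalized to the range 0 to 1 by whatever metric you use; the thresholds and label names are placeholders, not values from the research.

```python
def classify_output(perceptual_quality, alignment, quality_floor=0.5, alignment_floor=0.5):
    """Label an output by which of the two independent axes failed.

    Both scores are assumed to lie in [0, 1]; the floors are arbitrary
    placeholders that you would tune for your own metric.
    """
    quality_ok = perceptual_quality >= quality_floor
    aligned = alignment >= alignment_floor
    if quality_ok and aligned:
        return "pass"
    if aligned:
        return "artifacted"      # matches the prompt, but visually degraded
    if quality_ok:
        return "misaligned"      # clean image of the wrong thing
    return "both_failed"
```

Keeping the labels distinct means a stress test can assert that a given scenario triggers the failure mode it was designed for, and not just any failure.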

The anatomy of deliberate failure

Chaos testing for image generation means creating scenarios that shouldn’t happen but inevitably do. Start with prompts that push the model’s comprehension boundaries: contradictory requests like “photorealistic abstract concept,” edge cases involving multiple conflicting styles, or extremely long descriptions that exceed context windows. Then move to infrastructure failures: simulate network interruptions mid-generation, corrupt API responses, or max out rate limits to see how gracefully the tool degrades.
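The infrastructure side can be sketched as a wrapper that injects faults around a generation call. Everything here is an assumption made for illustration: `fake_generate` is a stand-in and not nano-banana-pro’s actual API, and the exception names and rate parameters are invented.

```python
import random


class InjectedTimeout(Exception):
    """Simulated network stall mid-generation."""


class InjectedRateLimit(Exception):
    """Simulated rate-limit rejection."""


def fake_generate(prompt):
    # Hypothetical stand-in for a real image client.
    return {"status": "ok", "image_bytes": b"\x89PNG" + prompt.encode()[:16]}


def with_chaos(generate, rng, timeout_rate=0.0, rate_limit_rate=0.0, corrupt_rate=0.0):
    """Wrap a generate function so a fraction of calls fail in a chosen way."""
    def wrapped(prompt):
        roll = rng.random()  # uniform in [0, 1)
        if roll < timeout_rate:
            raise InjectedTimeout("simulated network interruption")
        if roll < timeout_rate + rate_limit_rate:
            raise InjectedRateLimit("simulated rate limit")
        response = generate(prompt)
        if roll < timeout_rate + rate_limit_rate + corrupt_rate:
            # Truncate the payload to mimic a response cut off in transit.
            response = dict(response, image_bytes=response["image_bytes"][:2])
        return response
    return wrapped


# Usage: a seeded generator keeps a chaos run reproducible.
chaotic = with_chaos(fake_generate, random.Random(42), timeout_rate=0.1, corrupt_rate=0.1)
```

Passing in a seeded `random.Random` is a deliberate choice: when a run uncovers a bad failure, you can replay the same sequence of faults.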

The beauty of this approach is that it forces you to think about error handling as a first-class feature rather than an afterthought. Chaos engineering methodology starts by measuring steady-state behavior and only then introduces real-world disruptions, which pushes you to instrument your tools with observability from the start. You can’t test chaos effectively if you don’t know what normal looks like, which means building metrics into every stage of the generation pipeline.
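Knowing what normal looks like can start as a summary of success rate and latency from ordinary runs. This sketch assumes each sample is a `(succeeded, latency_seconds)` pair; the tolerance values are arbitrary placeholders rather than recommendations.

```python
import statistics
from dataclasses import dataclass


@dataclass(frozen=True)
class SteadyState:
    success_rate: float
    median_latency_s: float
    p95_latency_s: float


def summarize(samples):
    """Summarize (succeeded, latency_seconds) pairs into a steady-state profile."""
    if not samples:
        raise ValueError("need at least one sample")
    latencies = sorted(latency for _, latency in samples)
    # Nearest-rank 95th percentile, computed with integer arithmetic.
    p95_index = (95 * len(latencies) + 99) // 100 - 1
    return SteadyState(
        success_rate=sum(1 for ok, _ in samples if ok) / len(samples),
        median_latency_s=statistics.median(latencies),
        p95_latency_s=latencies[p95_index],
    )


def within_steady_state(observed, baseline, max_success_drop=0.05, max_latency_factor=2.0):
    """True if the observed run stays close enough to the baseline."""
    return (
        observed.success_rate >= baseline.success_rate - max_success_drop
        and observed.p95_latency_s <= baseline.p95_latency_s * max_latency_factor
    )
```

You would compute the baseline once under normal load, then compare every chaos run against it to see how far the disruption pushed the system.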

Quantifying the unquantifiable

One challenge with testing generative AI is that quality isn’t purely objective. An image that fails technical metrics might still be aesthetically interesting, while a technically perfect output could be creatively bankrupt. This is where multi-granularity similarity measurements become useful. You need both coarse-grained evaluations that capture overall alignment and fine-grained metrics that detect subtle degradation in specific regions or attributes.
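To show why both granularities matter, here is a toy comparison on grayscale images stored as nested lists of floats. It is a simplified illustration of the coarse-versus-fine idea, not the similarity metric from the research literature, and the tile size is an arbitrary choice.

```python
def mean_abs_diff(a, b):
    """Mean absolute pixel difference between two equally sized images."""
    total = 0.0
    count = 0
    for row_a, row_b in zip(a, b):
        for pixel_a, pixel_b in zip(row_a, row_b):
            total += abs(pixel_a - pixel_b)
            count += 1
    return total / count


def tile_diffs(a, b, tile):
    """Mean absolute difference for each tile, scanning left to right, top to bottom."""
    height, width = len(a), len(a[0])
    diffs = []
    for top in range(0, height, tile):
        for left in range(0, width, tile):
            tile_a = [row[left:left + tile] for row in a[top:top + tile]]
            tile_b = [row[left:left + tile] for row in b[top:top + tile]]
            diffs.append(mean_abs_diff(tile_a, tile_b))
    return diffs


def multi_granularity(a, b, tile=2):
    """Report a whole-image score alongside the worst local score."""
    return {"coarse": mean_abs_diff(a, b), "fine_worst": max(tile_diffs(a, b, tile))}


# A 4x4 reference and a copy whose top-left 2x2 corner is fully corrupted.
reference = [[0.0] * 4 for _ in range(4)]
damaged = [row[:] for row in reference]
for y in range(2):
    for x in range(2):
        damaged[y][x] = 1.0
```

For this pair, `multi_granularity(reference, damaged)` gives a coarse score of 0.25 but a worst-tile score of 1.0: the whole-image average hides a region that is completely destroyed.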

My stress test suite for nano-banana-pro runs batches of intentionally problematic prompts and measures response distributions rather than individual successes. If ninety percent of edge cases produce recoverable errors with clear failure messages, that’s a win. If ten percent hang indefinitely or return corrupted data without warning, that’s a critical finding. The goal isn’t perfection—it’s predictable failure modes that agents can handle programmatically.
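Measuring distributions rather than individual results might look like the sketch below. The outcome categories mirror the ones described above, but the names are my own labels for illustration and don’t reflect the suite’s actual code.

```python
from collections import Counter
from enum import Enum


class Outcome(Enum):
    OK = "ok"
    RECOVERABLE_ERROR = "recoverable_error"    # failed with a clear message
    HANG = "hang"                              # never returned before the deadline
    SILENT_CORRUPTION = "silent_corruption"    # returned bad data without warning


# Outcomes a calling agent cannot handle programmatically.
CRITICAL = frozenset({Outcome.HANG, Outcome.SILENT_CORRUPTION})


def distribution(outcomes):
    """Fraction of the batch that landed in each outcome category."""
    if not outcomes:
        raise ValueError("empty batch")
    counts = Counter(outcomes)
    return {outcome: counts[outcome] / len(outcomes) for outcome in Outcome}


def critical_rate(outcomes):
    """Fraction of the batch that hung or returned corrupted data silently."""
    if not outcomes:
        raise ValueError("empty batch")
    return sum(1 for outcome in outcomes if outcome in CRITICAL) / len(outcomes)
```

A batch then passes or fails on its `critical_rate`, not on whether any single prompt happened to succeed.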

Automation as a requirement

Running chaos tests by hand doesn’t scale: you won’t reach the coverage or consistency needed to find real weaknesses. The principles of chaos engineering explicitly call for automation and continuous experimentation, which means your test suite needs to be part of the deployment pipeline. Every time nano-banana-pro gets updated, the chaos tests run automatically and flag any regressions in resilience.
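A pipeline gate for resilience regressions can be quite small. This sketch assumes you store a critical-failure rate per scenario from the last accepted run; the scenario names, the tolerance, and the exit-code convention are assumptions, not details of any particular CI system.

```python
def find_regressions(baseline, current, tolerance=0.02):
    """Return (scenario, previous_rate, current_rate) for each scenario that got worse.

    Both arguments map a scenario name to its critical-failure rate.
    """
    regressions = []
    for scenario, rate in sorted(current.items()):
        previous = baseline.get(scenario)
        if previous is None:
            continue  # new scenario: nothing to compare against yet
        if rate > previous + tolerance:
            regressions.append((scenario, previous, rate))
    return regressions


def gate(baseline, current, tolerance=0.02):
    """Return a process exit code: 0 when resilience held, 1 when it regressed."""
    regressions = find_regressions(baseline, current, tolerance)
    for scenario, previous, rate in regressions:
        print(f"REGRESSION {scenario}: {previous:.2f} -> {rate:.2f}")
    return 1 if regressions else 0
```

In a pipeline step you would pass the result to `sys.exit`, so a regression fails the build the same way a broken unit test would.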

This creates a feedback loop: chaos testing makes the tool more robust, which makes it safer to introduce new chaos scenarios, which uncovers new weaknesses, which drives further improvements. The test suite becomes a living document of everything that can go wrong and how the system responds. When an agent calls nano-banana-pro during a heartbeat routine at 3am, it’s relying on the accumulated knowledge from thousands of deliberate failures that taught the tool how to fail gracefully.

The ultimate metric isn’t how well your tool works under ideal conditions—it’s how predictably it breaks when reality hits. Chaos testing turns failure from a problem into a design parameter, and that shift in perspective is what separates tools that survive production from tools that collapse the first time someone feeds them unexpected input.